Systems project (what the simulator will be capable of): A shared-memory C++ program that steps a 2D mesh representing a simplified processor floorplan forward in time. Each timestep applies explicit finite-difference-style thermal diffusion, then updates cell geometry and neighbor conductances to capture thermo-elastic feedback. The implementation will expose tunable material/power maps, thread count, and scheduling so we can measure parallel behavior on real lab machines.

Hoped performance (systems): We aim to demonstrate clear multi-core speedups and to quantify how much coupling and mesh dynamics cost relative to a static baseline. Specific numeric targets and justification appear under Plan to achieve below; stretch targets appear under Hope to achieve.

PLAN TO ACHIEVE

The following items are what we believe we must complete for a successful project and the grade we expect. They are ordered from simulation core through evaluation.

1. 2D mesh simulator

Cell characteristics:

- Temperature (T): Current temperature of the cell.

- Heat capacity (c): How much energy it takes to raise the cell’s temperature; can vary by type (ALU, cache, interconnect).

- Power generation (p): Amount of heat the cell produces per timestep, reflecting activity level.

- Thermal conductivity (k): Rate at which the cell exchanges heat with each neighbor. Can be directional if desired (e.g., vertical vs horizontal conduction).

- Neighbors (Ni): List of adjacent cells or a fixed stencil (4-way or 8-way).
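
The per-cell fields listed above could be stored as a structure of arrays on a structured grid. The following is only a minimal sketch: the names `Mesh`, `idx`, and `neighbors4`, and the choice of a row-major 4-way stencil, are illustrative assumptions rather than a committed design.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical structure-of-arrays layout for a structured width x height mesh.
// Each vector holds one field for every cell, indexed row-major.
struct Mesh {
    std::size_t width;
    std::size_t height;
    std::vector<double> T;  // temperature
    std::vector<double> c;  // heat capacity
    std::vector<double> p;  // heat generated per timestep
    std::vector<double> k;  // thermal conductivity

    Mesh(std::size_t w, std::size_t h, double T0, double c0, double k0)
        : width(w), height(h),
          T(w * h, T0), c(w * h, c0), p(w * h, 0.0), k(w * h, k0) {}

    // Row-major index of cell (x, y).
    std::size_t idx(std::size_t x, std::size_t y) const { return y * width + x; }

    // Indices of the 4-way stencil neighbors that lie inside the mesh.
    std::vector<std::size_t> neighbors4(std::size_t x, std::size_t y) const {
        std::vector<std::size_t> n;
        if (x > 0)          n.push_back(idx(x - 1, y));
        if (x + 1 < width)  n.push_back(idx(x + 1, y));
        if (y > 0)          n.push_back(idx(x, y - 1));
        if (y + 1 < height) n.push_back(idx(x, y + 1));
        return n;
    }
};
```

Keeping each field in its own contiguous array is one way to make the inner loop stream through memory; an array-of-structs layout would be an equally valid starting point.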

Customizable aspects:

- Different cell types with distinct c, p, k values.

- Cell type tagging: ALU, cache, memory, interconnect → different thermal/mechanical parameters.

- Non-uniform mesh spacing (some cells larger to represent bigger blocks).

- Boundary conditions: fixed temperature, heat sink, or insulated edges.
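
As a rough illustration of one explicit thermal step with insulated edges, the sketch below uses an assumed update rule, `T_new = T + (p + sum of k * (T_neighbor - T)) / c`, with the timestep folded into `p` and `k`. The proposal does not fix the exact formula, so the function name `thermal_step` and the single scalar conductance `k` are hypothetical simplifications.

```cpp
#include <cstddef>
#include <vector>

// One explicit step on a W x H grid with insulated (zero-flux) edges.
// Assumed update rule (not specified in the proposal):
//   T_new[i] = T[i] + (p[i] + sum_j k * (T[j] - T[i])) / c[i]
// where j ranges over the 4-way neighbors of cell i.
std::vector<double> thermal_step(const std::vector<double>& T,
                                 const std::vector<double>& c,
                                 const std::vector<double>& p,
                                 double k, std::size_t W, std::size_t H) {
    std::vector<double> T_new(T.size());
    for (std::size_t y = 0; y < H; ++y) {
        for (std::size_t x = 0; x < W; ++x) {
            const std::size_t i = y * W + x;
            double flux = 0.0;
            // A missing neighbor at an edge contributes nothing: insulated boundary.
            if (x > 0)     flux += k * (T[i - 1] - T[i]);
            if (x + 1 < W) flux += k * (T[i + 1] - T[i]);
            if (y > 0)     flux += k * (T[i - W] - T[i]);
            if (y + 1 < H) flux += k * (T[i + W] - T[i]);
            T_new[i] = T[i] + (p[i] + flux) / c[i];
        }
    }
    return T_new;
}
```

With this rule, an interior cell keeps a non-negative weight on its own temperature only when `4 * k / c <= 1`, so parameter choices would need to respect a bound of that kind to avoid oscillation. A fixed-temperature boundary could be modeled by overwriting edge cells after each step.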

2. Coupled deformation

Goal: Model thermomechanical feedback: cells expand or contract with temperature, which in turn changes their thermal coupling to neighbors.

Cell characteristics:

- Coefficient of thermal expansion (α): Determines how much the cell expands per unit temperature rise.

- Geometry / size: Width/height or area of the cell (affects neighbor distances and thus conductance).

- Strain / displacement (ε): Optional; tracks cumulative deformation.

- Neighbor conductances (kij): Adjust dynamically based on expansion/contraction.

Customizable aspects:

- Different expansion coefficients per material type.

- Adjustable method of conductance scaling (linear, nonlinear, capped, or weighted by distance).

- Option to track cumulative strain for “reliability-aware” simulations.
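
One possible form of the geometry and conductance update is sketched below. It assumes linear expansion about a reference temperature and a conductance that is inversely proportional to center-to-center distance, then capped. Both rules, and the names `expanded_width` and `scaled_conductance`, are assumptions chosen for illustration; the proposal leaves the scaling method open.

```cpp
#include <algorithm>

// Linear thermal expansion: the width grows by a factor alpha per unit
// temperature rise above the reference temperature T_ref.
double expanded_width(double w0, double alpha, double T, double T_ref) {
    return w0 * (1.0 + alpha * (T - T_ref));
}

// Assumed scaling rule: the conductance between two adjacent cells is
// inversely proportional to their center-to-center distance, then clamped
// to [k_min, k_max] (the "capped" option).
double scaled_conductance(double k0, double d0, double w_i, double w_j,
                          double k_min, double k_max) {
    const double d = 0.5 * (w_i + w_j);  // current center-to-center distance
    const double k = k0 * d0 / d;        // distance-weighted scaling
    return std::max(k_min, std::min(k, k_max));
}
```

Under the linear rule, the strain of a cell is simply `alpha * (T - T_ref)`, so cumulative strain tracking would only need to accumulate that quantity (or its magnitude) per step.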

3. Parallel multi-threaded implementation

Goal: Accelerate simulation by updating many cells simultaneously.

Considerations per cell:

- Updates for T and strain are independent within a timestep (based on previous step’s values).

- Neighbor access pattern: for conductance update, a cell may read adjacent cells’ strain; avoid race conditions via double-buffering or per-thread local copies.

- Workload weight: Some cells produce more heat and therefore see larger temperature changes, which may concentrate compute in some regions of the mesh.

Customizable aspects:

- Choice of threading library: OpenMP, pthreads, or hybrid.

- Thread assignment strategy: static blocks vs. dynamic work queue.

- Adjustable number of threads for scaling experiments.
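
A double-buffered OpenMP loop is one way to realize the race-free update described above. The sketch below is illustrative only: `run_parallel` is a hypothetical name, and it uses a uniform heat capacity `c` and conductance `k` to stay short. Threads read only the current buffer and write only the next one, and the pragma is simply ignored if OpenMP is not enabled at compile time.

```cpp
#include <utility>
#include <vector>

// Advance T by `steps` explicit timesteps on a W x H grid with insulated edges.
// Reads come from T and writes go to `next`, so no thread reads a value that
// another thread is updating in the same step.
void run_parallel(std::vector<double>& T, const std::vector<double>& p,
                  double k, double c, int W, int H, int steps) {
    std::vector<double> next(T.size());
    for (int s = 0; s < steps; ++s) {
        // Rows are divided among threads; schedule(static) gives contiguous blocks.
        #pragma omp parallel for schedule(static)
        for (int y = 0; y < H; ++y) {
            for (int x = 0; x < W; ++x) {
                const int i = y * W + x;
                double flux = 0.0;
                if (x > 0)     flux += k * (T[i - 1] - T[i]);
                if (x + 1 < W) flux += k * (T[i + 1] - T[i]);
                if (y > 0)     flux += k * (T[i - W] - T[i]);
                if (y + 1 < H) flux += k * (T[i + W] - T[i]);
                next[i] = T[i] + (p[i] + flux) / c;
            }
        }
        // The parallel loop ends with an implicit barrier; the swap is serial and O(1).
        std::swap(T, next);
    }
}
```

Replacing `schedule(static)` with `schedule(dynamic, chunk)` would be the simplest way to compare the two thread assignment strategies, and the thread count can be varied through the `OMP_NUM_THREADS` environment variable. With GCC or Clang the pragma takes effect when compiling with `-fopenmp`.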

4. Performance evaluation

Goal: Measure how efficiently the simulation scales across multiple threads and explore the effects of different parallelization strategies. The focus is on achieving high speedup and maintaining good load balance, rather than on detailed thermal correctness.

Metrics:

- Speedup: wall-clock time with one thread divided by wall-clock time with N threads (T1 / TN).

- Parallel efficiency: How close observed scaling is to ideal linear speedup, i.e., speedup divided by N.

- Load balance: Track per-thread execution time or work distribution to identify imbalance caused by hotspots or irregular mesh layouts.
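
The three metrics above reduce to a few lines of arithmetic. In the sketch below, `speedup` and `efficiency` follow the definitions given, while the max-over-mean ratio in `imbalance` is one common way to summarize load balance and is our assumption, not a measure the plan commits to.

```cpp
#include <algorithm>
#include <numeric>
#include <vector>

// Speedup: single-thread wall-clock time divided by N-thread wall-clock time.
double speedup(double t1, double tn) { return t1 / tn; }

// Parallel efficiency: speedup divided by thread count (1.0 is ideal).
double efficiency(double t1, double tn, int n) { return speedup(t1, tn) / n; }

// Load imbalance (assumed metric): slowest thread time over mean thread time.
// 1.0 means perfectly balanced. Expects a non-empty vector.
double imbalance(const std::vector<double>& per_thread) {
    const double max_t = *std::max_element(per_thread.begin(), per_thread.end());
    const double sum_t = std::accumulate(per_thread.begin(), per_thread.end(), 0.0);
    return max_t / (sum_t / per_thread.size());
}
```

For example, a run that takes 8 s on one thread and 2 s on eight threads has speedup 4 and efficiency 0.5.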

Customizable aspects (for achievable experiments):

- Mesh size: e.g., 100×100, 500×500 cells

- Power patterns: uniform, a few hotspots, or random loads

- Number of threads and thread scheduling strategies (static vs. simple dynamic partitioning)

Analysis approach:

- Break down execution time into compute, synchronization, and geometry update phases.

- Compare speedup across thread counts to evaluate how close the simulation gets to ideal scaling.

- Identify major bottlenecks, such as memory contention, synchronization barriers, or uneven workloads.

- Present graphs showing speedup vs. thread count, per-thread load distribution, and time breakdowns.
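
The time breakdown could be collected with a small accumulator per phase. This is a sketch under the assumption that phases are timed from the coordinating thread with `std::chrono::steady_clock`; `PhaseTimer` is a hypothetical helper, not part of any existing code.

```cpp
#include <chrono>

// Accumulates wall-clock seconds spent in one phase across many timesteps.
struct PhaseTimer {
    double seconds = 0.0;

    // Run `work` once and add its elapsed wall-clock time to the total.
    template <typename F>
    void time(F&& work) {
        const auto start = std::chrono::steady_clock::now();
        work();
        const auto stop = std::chrono::steady_clock::now();
        seconds += std::chrono::duration<double>(stop - start).count();
    }
};
```

One timer each for the thermal update, the geometry update, and the conductance refresh would give the per-phase totals; time spent waiting at barriers could then be estimated as the step total minus the sum of the phases.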

Potential solutions:

- Overlap temperature update and geometry update using double buffering and fine-grained synchronization, so some threads start computing the next step while others finish current mesh updates.

- Experiment with non-blocking barriers or pipelined updates to reduce waiting time between timesteps.

Target outcomes and justification (plan)

We may not know final numbers until implementation lands, but we commit to concrete, measurable goals and explain why they are realistic:

- Multi-core speedup: Show at least 4× wall-clock speedup on 8 threads vs 1 thread for the full coupled timestep (thermal update + geometry update + conductance refresh) on a mesh of at least 256×256 cells for a uniform-power baseline on GHC or PSC. Justification: Per-timestep cell updates are embarrassingly parallel with only barriers between phases; we expect synchronization, not neighbor arithmetic, to cap scaling first, which we will measure explicitly.

- Scaling curve: Report speedup (or time-to-solution) for thread counts in {1, 2, 4, 8} (and higher when hardware allows), with fixed total timesteps and mesh size stated in the writeup.

- Load imbalance signal: For at least one hotspot-style power map, report per-thread timing or work proxies so we can discuss whether static partitioning leaves cores idle. Justification: Hotspots are inherent to the workload; documenting imbalance supports claims about scheduling choices.
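
One way to sanity-check the 4× target on 8 threads is Amdahl's law, which the plan does not invoke explicitly. The sketch below treats all non-scaling cost (barriers, serial swaps) as a fixed serial fraction `f`, which is a simplification, since synchronization cost can itself grow with thread count. The function names are hypothetical.

```cpp
// Amdahl's law: speedup on n threads when a fraction f of the work is serial.
double amdahl_speedup(double f, int n) {
    return 1.0 / (f + (1.0 - f) / n);
}

// Largest serial fraction that still reaches `target` speedup on n threads.
// Derived by solving 1 / (f + (1 - f) / n) = target for f.
double max_serial_fraction(double target, int n) {
    return (static_cast<double>(n) / target - 1.0) / (n - 1.0);
}
```

Under this model, `max_serial_fraction(4.0, 8)` evaluates to 1/7, so reaching 4× on 8 threads tolerates roughly 14% of single-thread time being non-parallel.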

HOPE TO ACHIEVE (if ahead of schedule)

Extra goals if the project goes well and we get ahead of schedule. Each includes why it might be reachable.

1. Interactive / live simulation

- Allow the simulator to run in near real-time, updating heatmaps and strain dynamically as power inputs change.

- Provide a live visualization of the mesh deforming or the heat flow changing over time.

Why it might be achievable: If the core timestep is already fast on moderate meshes, throttling visualization resolution and timestep count can keep an interactive loop within tens of milliseconds per frame on lab CPUs.

2. Customizable inputs & scenarios

- Write a program or interface to adjust power profiles on-the-fly, e.g., simulate a hotspot suddenly powering up.

- Allow users to set cell material properties, expansion coefficients, or thermal conductances dynamically.

- Include dynamic load injection to test extreme or non-uniform scenarios.

Why it might be achievable: A simple file- or stdin-driven power trace and a small set of CLI flags reuse the same engine without rewriting the hot loop.

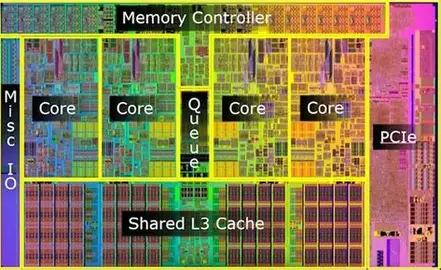

3. Flexible mesh / floorplan import

- Support importing arbitrary processor floorplans, e.g., from JSON, CSV, or standard CAD formats.

- Map imported floorplans to the mesh, assigning thermal properties to each block automatically.

Why it might be achievable: Parsing a coarse JSON/CSV block list into rectilinear regions is orthogonal to the timestep kernel and can follow once the mesh data structure stabilizes.
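
To give a sense of how small such an importer could be, the sketch below parses an assumed CSV layout of `type,x,y,w,h` per line (in cell coordinates, no header, no quoting) and paints block indices onto the grid. The file format, the `Block` struct, and both function names are hypothetical; no real floorplan format is implied.

```cpp
#include <algorithm>
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical block record: a rectangle in cell coordinates plus a type tag.
struct Block { std::string type; int x, y, w, h; };

// Parse lines of the assumed form "type,x,y,w,h". Malformed numbers throw
// from std::stoi; empty lines are skipped.
std::vector<Block> parse_blocks(const std::string& csv) {
    std::vector<Block> blocks;
    std::istringstream in(csv);
    std::string line;
    while (std::getline(in, line)) {
        if (line.empty()) continue;
        std::istringstream fields(line);
        std::string type, x, y, w, h;
        std::getline(fields, type, ',');
        std::getline(fields, x, ',');
        std::getline(fields, y, ',');
        std::getline(fields, w, ',');
        std::getline(fields, h, ',');
        blocks.push_back({type, std::stoi(x), std::stoi(y), std::stoi(w), std::stoi(h)});
    }
    return blocks;
}

// Record, for every cell of a W x H grid, the index of the block covering it
// (-1 if none). Blocks are clipped to the grid; later blocks overwrite earlier ones.
std::vector<int> rasterize(const std::vector<Block>& blocks, int W, int H) {
    std::vector<int> block_of_cell(static_cast<std::size_t>(W) * H, -1);
    for (std::size_t b = 0; b < blocks.size(); ++b) {
        const Block& blk = blocks[b];
        for (int y = std::max(0, blk.y); y < std::min(H, blk.y + blk.h); ++y)
            for (int x = std::max(0, blk.x); x < std::min(W, blk.x + blk.w); ++x)
                block_of_cell[static_cast<std::size_t>(y) * W + x] = static_cast<int>(b);
    }
    return block_of_cell;
}
```

Per-cell thermal properties could then be filled in by looking up each block's `type` in a small table of material parameters.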

4. Enhanced visualization & output

- Generate animated heatmaps or videos showing thermal propagation and mesh deformation over time.

- Produce performance dashboards combining heatmaps, strain, and thread utilization for parallel runs.

Why it might be achievable: Dumping per-step PNGs or CSVs from existing fields is mostly I/O plumbing once the simulation produces stable outputs.

Stretch performance target (hope): If tuning succeeds, we would like to approach roughly 6–8× speedup at 8 threads on the same baseline mesh as in our plan, beyond the planned 4× floor, because reducing barrier overhead or improving SoA layout could recover a meaningful fraction of serial time. We will only claim this if measurements support it.

If work goes more slowly (contingency)

If we fall behind, we will shrink scope in a defined order so we still ship a defensible parallel study:

- First cut: Keep a structured grid only (no unstructured adjacency rebuilds); deformation only adjusts distances/conductances, not topology.

- Second cut: Defer dynamic scheduling and advanced load-balancing experiments; stick to static block partitioning with clear speedup plots.

- Third cut: Reduce mesh ambition to 128×128 (or similar) for scaling studies while preserving the static-vs-dynamic overhead comparison on that size.

- Last resort: Deliver a correct single-threaded coupled simulator plus a parallel thermal-only path if coupling parallelization slips, and document the gap explicitly in the milestone/final writeup.

Experimental analysis — questions we plan to answer

This is primarily a systems implementation project, but our evaluation is designed to answer concrete questions about the workload and machine mapping:

- Where does time go? What fraction of each timestep is thermal update vs geometry vs synchronization, and how does that split change with thread count?

- What limits speedup? Do we hit a barrier wall, memory bandwidth from irregular access after deformation, or insufficient parallelism for small meshes?

- How much does thermo-elastic coupling cost? How large is the slowdown vs a static mesh at equal resolution, and does that cost grow with hotspot severity?

- Does workload heterogeneity create imbalance? Under hotspot power maps, do we observe skewed per-thread times under static partitioning, and does a simpler dynamic scheme help?